Making Sense of the AI Storm

Everyone had an AI story this year at CES 2026, held from January 6-9, 2026, in Las Vegas, from the largest heavy equipment manufacturers to the humble toothbrush. The Consumer Technology Association’s annual Tech Trends presentation on January 4, 2026, said 63% of the U.S. population has used AI at their jobs, resulting in an estimated 8.7 hours of work saved per week.

Presenters of the report went on to note the growth of Agentic AI, Vertical AI, Industrial AI and the larger sector of Physical AI, combining machine intelligence with hardware for autonomous vehicles, specialized robots fine-tuned for specific tasks, and humanoid robots which are being pitched to replace human beings in warehouses and on the factory floor.

However, not everyone was sold on the hype. Tim Albright, President of AvNation TV and a long-time CES attendee, went so far as to call the world’s premier technology event “AI Drunk,” citing overload and misuse of the term across products, applications, and services.

For example, this reporter saw several major international industrial brands promote AI as the solution for better family cooking, so long as one had bought into an intelligent appliance ecosystem. That ecosystem includes refrigerators loaded with cameras that use image recognition to keep track of the groceries stored within, then go to the cloud to suggest recipes based on what is available and which items are reaching the end of their shelf life. It was an impressive demonstration of how to reduce food waste and help busy families get the most out of their groceries, but it is not affordable for most households, which do not own the substantially more expensive devices and have no urgent need to replace their existing kitchen appliances.

Yet one cannot deny the march of AI and its continuing role in driving the need for reliable high-speed, low-latency broadband in any number of sectors large and small, in evolutionary ways in some and quietly revolutionary ways in others. The current wave of AI merging into today’s economy is not Artificial General Intelligence (AGI) but applied intelligence: using AI to improve productivity and enhance safety, with applications running at the edge, in the cloud, and across orchestrated combinations of edge, devices, and cloud.

Big Iron Meets GPU Power

Caterpillar, Doosan Bobcat, and Oshkosh promoted their advances in incorporating AI into their products, with some features almost passé while others could significantly enhance productivity and safety. Caterpillar noted in its CEO keynote that less than 5% of the U.S. workforce is employed in construction, yet the industry accounts for over 20% of workplace fatalities. Citing statistics from the Association of Equipment Manufacturers, Bobcat said 40% of equipment operators are expected to retire by 2031, creating the need to train and support the next generation of workers. Oshkosh went a step further on the CES exhibit floor, illustrating how humans can be replaced with robots in operations such as driving support vehicles and welding.

Today’s robotic welder doing away with the human in a bucket crane could be tomorrow’s robotic aerial cable worker, according to Oshkosh. Source: Doug Mohney

Bobcat’s driver display, shown here on the top half of the picture, is able to highlight the location of underground utilities using geolocation data for damage protection purposes. Source: Doug Mohney

Bobcat’s approach to AI is incremental, leveraging continuous investments in telematics and electronics to bring assistance into the driver’s seat for workers of any skill level. Its Jobsite Companion will provide in-cab training and support, while voice-activated controls let operators quickly customize machine settings for specific jobs and tools instead of digging through manuals and menus. The goal is not to replace operators in the driver’s seat, but to help new ones do their jobs better and more quickly.

The Bobcat cab window is now equipped with a digital display that overlays jobsite visuals with critical data, including the ability to designate “keep out” areas and to overlay utilities information. Network construction firms will no doubt be intrigued by the ability to display job plans and show where buried utilities lie through the operator’s display, marking such areas as “no go/no dig” zones and tying them in with the onboard collision warning system to slow or stop machinery before it damages something.

Recognizing that automation will shift the nature of the construction industry, Caterpillar announced it is committing $25 million to strengthen the workforce behind the “invisible layer” of AI and automation, funding training, education, and partnerships to help people transition into the new roles these technologies are creating.

Fiber-Deploying Robots?

Oshkosh is another big iron firm that is tying its vehicles into AI and broadband. The company builds a range of machines including elevated work platforms, purpose-built vehicles for defense, firefighting, refuse collection, and aviation ground support. Its latest announcements at CES lean into analytics, autonomy, and robotics.

“Everything we build today is connected in some way, shape, or form,” said Chad Smith, Senior Director of Engineering, Oshkosh. “We have telematics solutions. Clear Sky and IOPS are our customer portals to all the data coming from their vehicles. They could see health and usage and all that [information].”

AI is showing up on Oshkosh firefighting vehicles to enhance safety through its Collision Avoidance Mitigation System (CAMS), which warns firefighters of approaching vehicles when they are working on active roadways.

“Unfortunately, firefighters, tow truck operators, they get hit on the side of the road a lot,” said Smith. “The goal of the system is to identify if a car is going to get hit by a vehicle, it’s an alarm to give them a warning.”

CAMS uses radar, computer vision, and AI to detect, classify, and track oncoming traffic, continuously evaluating speed, trajectory, and proximity in relation to the parked vehicle. Oshkosh plans to scale the system for ambulances, police, and tow truck operators, with future evolutions including a mobile unit that can be set up on highways and dark shoulders.
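To make the idea of evaluating speed, trajectory, and proximity concrete, here is a minimal sketch of one common way to reason about an approaching vehicle: a closest-point-of-approach check under a constant-velocity assumption. It is an illustration only and is not Oshkosh’s method. The `Track` fields, the function names, and the 4-second and 2-meter thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """One tracked vehicle, relative to the parked truck (hypothetical layout)."""
    x: float   # metres along the road; positive means still upstream of the truck
    y: float   # metres of lateral offset from the truck
    vx: float  # metres/second along the road; negative means closing
    vy: float  # metres/second of lateral drift


def closest_approach(t: Track) -> tuple[float, float]:
    """Return (seconds until closest approach, miss distance in metres).

    Assumes the vehicle keeps its current velocity, a deliberate simplification.
    """
    speed_sq = t.vx ** 2 + t.vy ** 2
    if speed_sq == 0:
        # A stationary vehicle never gets closer than it is now.
        return float("inf"), (t.x ** 2 + t.y ** 2) ** 0.5
    # Time that minimises distance; clamp to 0 if the vehicle is already moving away.
    t_star = max(0.0, -(t.x * t.vx + t.y * t.vy) / speed_sq)
    dx, dy = t.x + t.vx * t_star, t.y + t.vy * t_star
    return t_star, (dx ** 2 + dy ** 2) ** 0.5


def should_warn(t: Track, max_time_s: float = 4.0, min_gap_m: float = 2.0) -> bool:
    """Warn when the vehicle will pass too close, too soon (assumed thresholds)."""
    t_star, miss = closest_approach(t)
    return t_star <= max_time_s and miss < min_gap_m


# A car 60 m away, closing at 25 m/s, half a metre off the truck's line.
print(should_warn(Track(x=60, y=0.5, vx=-25, vy=0)))
# The same car one full lane over (6 m of lateral offset).
print(should_warn(Track(x=60, y=6.0, vx=-25, vy=0)))
```

A production system would presumably fuse radar and camera tracks and account for acceleration and steering; the sketch only shows how speed, trajectory, and proximity can combine into a single warn-or-not decision.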

For airport operations, Oshkosh showed how robotics and autonomy increase efficiency, especially when humans are at risk. “Landing at the airport, there’s all these things that have to occur for that airplane to get unloaded, loaded, and back in the air,” said Smith. “There’s an orchestra of machines that all have to work at the same time around the airport. When there is bad weather and lightning, planes can operate and land, but people can’t be on the tarmac. Robots can. We’re showing how we’re using moments of autonomy to make these tough jobs a lot easier.”

A demonstration of automated bridge welding work raised deeper questions for construction firms. Typically, a human goes up in a basket and performs a job at height, which can be uncomfortable even in the best of conditions. Oshkosh demonstrated how a robot could do the job more quickly and at substantially less risk to personnel.

But if you can weld with a robot, could you hang aerial fiber with a robot?

“Absolutely,” said Smith. “It’s all about the job. We need to identify the jobs that need to be done, where we can bring automation to make that job easier or faster. Welding is an example we are demonstrating. We’ve announced an investment in a company that does drywall and paneling.”

Oshkosh is working with construction companies today to understand where robots can replace humans for difficult jobs that are labor intensive and potentially hazardous. The person in the bucket truck is replaced by an end-effector at the end of a boom, with the operator not replaced, but on the ground supervising and monitoring the work.

Smith did not have time to discuss how long it would take to produce a robot to deploy aerial cable or the advantages of such a device compared to manual deployment. Still, there could be significant gains in comfort, speed, and safety from putting fiber up on poles with a machine, with the fine-skill connector and splicing work still done by humans, for now.

Smart Glasses to Multiple Streams: Imaging’s Broad Steady Growth

Another area driving broadband capacity upward both upstream and downstream is the continued growth of devices and applications that use and process imagery in many different forms.

Techsponential President Avi Greengart was bullish on the latest generation of smart glasses being shown on the CES exhibit floor by numerous vendors, despite privacy concerns and the need for vendors to mature their applications. “The Ray Ban Metas [glasses], they’re selling in the millions,” he told this reporter and analysts in a post-show podcast. “They keep on expanding production capacity. They’re talking tens of millions now. That’s a fully mainstream product.”

Greengart experimented with a number of image-capture wearable devices during and after his time on the CES show floor, including a note-taking device and an always-on “lifeblogging” device, both of which used AI to provide summaries and understand context. “There are really some good use cases for some of these devices,” he said. “And there are the AI companions that sit on your desk and sort of annoy you. I saw both.”

High-speed broadband is the essential utility supporting the cornucopia of new devices flowing into the marketplace. Gigabit speeds are indispensable for users uploading audio, still photos, and video to the cloud for AI processing, real-time editing, and storage, and for the cloud-based AI assistants that provide additional features.

In the AI + more streaming data category, several vendors rolled out 8K cameras with onboard and cloud-based AI features. Dreame’s newly announced 8K resolution LEAPTIC camera incorporates onboard AI for adaptive audio and gesture control, so users can start and stop recordings and switch modes by simply raising a hand. In connection with the cloud, it provides enhanced camera assistance and visual editing. The camera has also been integrated into a drone, providing another avenue for broadband use.

From a broadband standpoint, the thumb-sized camera can collect more than 200 minutes of 8K video, and the device can be worn and configured to record at pre-set times for video blogging and other applications. Recording compressed 8K video creates 14 to 16 GB per minute, an amount that would take 15 to 20 minutes to upload at 100 Mbps but only 1.5 to 2.5 minutes at gigabit speeds, according to estimates on various websites.
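The upload figures can be sanity-checked with simple arithmetic. The sketch below assumes decimal units (1 GB = 8,000 megabits) and an idealized link that sustains its full rated speed with no protocol overhead; the function name `upload_minutes` is illustrative. Under those assumptions, one minute of footage works out to roughly 19 to 21 minutes at 100 Mbps and about 2 minutes at 1 Gbps, in the same range as the cited estimates.

```python
def upload_minutes(size_gb: float, link_mbps: float) -> float:
    """Minutes needed to upload size_gb gigabytes over a link_mbps link.

    Assumes decimal units and a link running at its full rated speed.
    """
    megabits = size_gb * 8 * 1000  # 1 GB = 8 gigabits = 8,000 megabits
    seconds = megabits / link_mbps
    return seconds / 60


# File sizes are the article's per-minute figures; link speeds are 100 Mbps and 1 Gbps.
for size_gb in (14, 16):
    for link_mbps in (100, 1000):
        minutes = upload_minutes(size_gb, link_mbps)
        print(f"{size_gb} GB at {link_mbps} Mbps: {minutes:.1f} min")
```

Real-world uploads will run slower than this, since residential upstream speeds are often well below the advertised downstream rate.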

A similar 8K camera from Insta360, the X5, promised about the same battery run time and boasted three onboard AI chips to provide enhanced video sampling, noise reduction, and optimization for night and low-light conditions at up to 60 frames per second. The onboard AI will also automatically remove the selfie stick from footage, while cloud-based editing enables quick turnaround for bloggers and other users.

Small form factor high-resolution 8K video cameras, such as the Insta360 X5 shown here, in combination with onboard and cloud-based AI services are one of the many broadband drivers emerging from CES 2026. Source: Insta360

While some exhibitors expanded video collection through smart glasses, generating more data at higher resolution with device-embedded and cloud-based AI assistants that change views and angles for the best presentation, others were blending multiple video streams together to create new forms of entertainment.

Canon and its partners are experimenting with volumetric video, using multiple cameras to record multiple simultaneous video streams to capture high-quality 3D data that can be used for converting an entire venue into an experience where you can “see” the action from any angle or viewpoint. Volumetric video can be used for 3D AR and VR applications as well as event viewing.

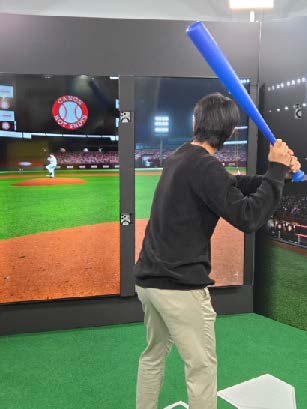

Initial demonstrations have used over 100 video cameras spread across a stadium to record sporting events such as baseball, basketball, and soccer, while the on-site Canon demonstration with AR51 used 16 cameras to let visitors be a baseball player in a batter’s box, with their swings captured and turned into a multiple-view highlight video.

Japanese startup SwipeVideo is also taking multiple video feeds and rolling them together into selectable-view services. The company’s latest deal with Japanese soccer teams will use 11 cameras so that viewers can pick their “favorite camera” for watching both live and recorded matches. Its previous activities include recording concerts at entertainment venues and educational presentations.

Changing the Moving Industry Through AI

Smaller start-up companies are using standardized AI processes such as pattern recognition and machine learning as building blocks for applications and services that will quietly transform commonplace industries for the benefit of both consumers and service providers, saving money and substantially improving the experience for all.

Agoyu is applying AI to the moving industry, enabling households to easily generate moving quotes on their own schedule using a smartphone. Traditionally, a mover must first find a prospective customer through online advertising, paying a premium for targeted ad words and geographies. Once the customer asks for a price estimate, the firm has to arrange a time to send a dedicated staff member through the household to document what will be moved, then generate a shipping estimate based on the type and number of items and how much they weigh. Finally, the estimate needs to be sent back to the customer with a contract for final agreement. The legacy process, from getting someone on site to documenting what needs to be moved to sending a quote back to the household, usually takes about a week per firm.

“The process of getting a price for your move has been difficult since the moving industry began,” said Bill Mulholland, Agoyu founder & CEO. “Agoyu uses spatial intelligence, it’s seeing the world. You just download the app and it allows the customer to use our free AI scanning tool to scan where they live. Agoyu uses AI to recognize every item in the home and how much it weighs. This is the key piece of information that movers always need to generate a quote. This eliminates the need for in-home inspections, reduces errors, and accelerates the quoting and booking process for both consumers and movers.”

Why have one viewpoint of an event when you can have 100? Canon and others are combining multiple video streams to build immersive 3-D and AR/VR experiences, such as this baseball batter demonstration, with the ability to select any angle you want in real time or playback. Source: Doug Mohney

The homeowner uses the app to take short video clips of the rooms in the house. Agoyu figures out what everything is. A combination of algorithms and APIs generates a shipping manifest and sends it out to participating moving companies, immediately returning the price each company would charge for the move, so a household can get up to 25 quotes through one simple process.

On top of that, Agoyu provides mover profiles for all bidding companies, linking to third-party systems that provide key data on how many years they’ve been in business, U.S. Department of Transportation information on safety and complaints, and independent customer reviews.

“Our last key feature is a reverse marketplace,” said Mulholland. “The behavior that we were seeing from consumers is once they got the price from all the movers, they contacted them and asked for a discount. Can you do any better on the price? We’ve made that process easier. You just simply hit the button to ask for a discount, and it blasts every mover in our platform and asks them to see if they can offer a better price to win the move.”

Movers get two business days to review the videos of the home, all the inventory lists, the contents of what’s being shipped, when the move date is, and where items will be shipped. Mulholland said customers are saving 20% to 30% off the original rates offered by asking for a discount.

The benefits for moving companies that participate in the Agoyu marketplace are multifold, explained Mulholland. It costs moving companies $750 to acquire a customer, between purchasing targeted ad words in specific areas and zip codes and having to send out a staffer to visit a household, document what is to be moved, estimate how much it weighs, and then add everything up to generate a cost and a contract. Agoyu does the work of finding customers, while the combination of household video and AI can generate pricing at the touch of a button.

Needless to say, Agoyu doesn’t exist without reliable high-speed connectivity on both ends: residential broadband for the household customers who upload video to its servers for AI to process and generate contracts, and enterprise broadband between the company and participating moving firms.

The Medical Boost to Broadband Needs

For the visually impaired, AI is providing increased accessibility, with companies using wearables with high-definition cameras and onboard algorithms to process and enhance imagery and to identify key features and obstacles. WeWalk has built Smart Cane 2, a mobility assistive device that accesses ChatGPT through the push of a button, enabling users to get turn-by-turn navigation, find public transportation, and receive other hands-free information without having to consult a phone.

While CES is traditionally associated with consumer technology, of late the event has drawn in a wide range of companies looking to showcase their advances. Silicon Valley startup Tiposi’s dragonfly portable brain imaging system could have a significant impact on stroke care if it lives up to its potential. The helmet-like device uses precision microwave antennas, high-speed DSP, and AI to conduct real-time assessment of brain health, giving clinicians the ability to assess stroke patients without a CT or MRI, devices that typically require expensive hardware and a dedicated facility.

Fiber Forward has previously written about telemedicine being used in rural areas for stroke assessment and the use of clot-busting drugs, with a remote on-call specialist providing the subject matter expertise via broadband video link. The dragonfly is small enough to easily be put onto an ambulance and affordable enough to make its way into rural ERs and hospitals, providing rapid and more affordable ways to assess patients. In a world where “Time is Brain,” and lost time means increased damage, this device could be a vital complement to existing and future stroke-focused telemedicine services.